SaaS MVP Development in 2026: The Engineering Decisions That Decide Whether You Ship or Sink

A senior engineer's framework for SaaS MVP development in 2026. Stack choices, architecture trade-offs, build-vs-buy decisions, AWS infrastructure, and the engineering calls that distinguish a startup that ships from one that does not.


Every "How to build a SaaS MVP in 2026" article you find on Google reads like the same listicle: pick a JavaScript framework, deploy on Vercel, use Stripe for payments, ship in 4 weeks, congratulations. The startups that actually ship MVPs do not look like this. The startups that ship and survive look like this even less. The decisions that decide whether you have a product in 18 months or a write-off are not framework choices; they are architectural, operational, and strategic choices that most "MVP guide" content actively avoids because the answers are uncomfortable.

This post is the framework we work through with founders and CTOs at Sentinu when we scope a SaaS MVP build. It is the conversation that happens before the Figma file is opened and the GitHub repo is created. The teams that have this conversation upfront ship MVPs that survive the first contact with paying customers. The teams that skip it usually rebuild from scratch within 18 months, which is the most expensive way to learn that an MVP is an engineering decision, not just a product decision.

The MVP that ships versus the MVP that sinks

After two decades watching SaaS MVPs land in the market, the pattern is consistent. The ones that ship and grow have five things in common. The ones that fail (in our experience) usually missed at least three.

They shipped one workflow end to end, not five workflows half-built. The successful MVP solves one specific problem completely for one specific user. Sign up, do the thing, see the result, get the value. The failed MVPs typically have a half-built dashboard, a half-built billing flow, a half-built integration, a half-built admin panel, and none of them work well enough to actually sell.

They had a working data model before they had a working frontend. The schema design that anchors what users, accounts, plans, and events look like in the database was settled before the UI was built. The MVPs that rebuild from scratch usually rebuild because the original data model could not represent the business model that emerged from customer conversations.

They picked boring technology choices. Postgres, not the latest vector database. Node or Python or Go, not the new language. AWS or Vercel, not the just-launched cloud provider. The successful MVPs spend their innovation budget on the product, not on the infrastructure.

They built in instrumentation from week one. The MVP that shipped with logging, error tracking, and basic analytics gets to "we know which features customers use" within two months. The one that did not gets there in month nine or never.

They had honest cost transparency. The MVPs that survive know what their AWS bill will be at 10 customers, 100, and 1,000. The ones that fail are surprised by a $4K cloud bill in month six and have to pause feature work for a month to fix it.

These are not framework choices. They are operating choices. The framework is downstream.

The five engineering decisions that decide the outcome

Decision 1: monolith or microservices

The strongest correlate of MVP failure we have seen is starting with microservices for a 2-person team building a product nobody has paid for yet. The architecture overhead is real and it kills velocity.

For an MVP, the right answer is almost always a monolith. One application, one repo, one deploy. The product can become a distributed system later, after you know what the actual service boundaries are. Service boundaries chosen too early are almost always wrong because the business model has not stabilized.

The exception: an MVP that is fundamentally about integrating multiple external systems (e.g., a B2B middleware product) often justifies a single integration service alongside the main app from day one. That is two services, not microservices.

We have seen teams ship MVPs as monoliths and migrate to microservices at 1,000 customers, and the migration was a 6-month project. We have seen teams ship MVPs as microservices and shut down before reaching 100 customers because the team could not move fast enough. The math favors the monolith for MVP-stage startups.

Decision 2: which database, and how to model the data

Postgres. Almost always Postgres. The reasons are not exciting: it is mature, it scales further than you will need for the MVP, the tooling is excellent, the hiring pool is large, and the failure modes are well-understood.

The interesting decision is not which database; it is how to model the multi-tenancy. Three common patterns:

Shared schema, tenant ID column. Every table has a tenant_id column. Every query filters by it. Simplest to operate, but also the easiest to get wrong (a missing WHERE tenant_id = ? is a data leak). Use Row-Level Security in Postgres to enforce this at the database layer, not just in application code. Right answer for most B2B SaaS MVPs.

Schema-per-tenant. Each tenant gets a Postgres schema. Better isolation, more complex operations (migrations, backups, schema changes scale linearly with tenant count). Use this when you have a small number of large tenants (enterprise SaaS) rather than a large number of small ones.

Database-per-tenant. Each tenant gets a separate database (or RDS instance). Maximum isolation, maximum operational complexity. Use this only when regulatory or contractual requirements force it (regulated industries, data sovereignty per tenant). Most MVPs should not start here.

We typically ship MVPs on the shared-schema pattern with Row-Level Security. It scales to thousands of tenants, the operational story is straightforward, and the failure modes are documented.
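As a rough illustration of the shared-schema pattern with Row-Level Security, the sketch below generates the Postgres statements that would restrict a table to the current tenant. The setting name `app.current_tenant`, the policy name `tenant_isolation`, and the assumption that `tenant_id` is a `uuid` column are all illustrative choices, not something prescribed above.

```python
"""Sketch: generate Postgres Row-Level Security DDL for shared-schema tenancy."""

# Assumption: the application stores the active tenant in a custom session
# setting. The name "app.current_tenant" is illustrative.
TENANT_SETTING = "app.current_tenant"


def rls_statements(table: str) -> list[str]:
    """Return the DDL that restricts `table` to rows of the current tenant."""
    # Table names cannot be bound as query parameters, so reject anything
    # that is not a plain identifier before interpolating it.
    if not table.isidentifier():
        raise ValueError(f"unsafe table name: {table!r}")
    return [
        f"ALTER TABLE {table} ENABLE ROW LEVEL SECURITY;",
        # FORCE applies the policy to the table owner as well.
        f"ALTER TABLE {table} FORCE ROW LEVEL SECURITY;",
        # Assumption: tenant_id is a uuid column on every tenant-scoped table.
        f"CREATE POLICY tenant_isolation ON {table} "
        f"USING (tenant_id = current_setting('{TENANT_SETTING}')::uuid);",
    ]


# Run at the start of each transaction with the tenant ID as a bound
# parameter. The third argument (true) scopes the value to that transaction.
SET_TENANT_SQL = f"SELECT set_config('{TENANT_SETTING}', %s, true);"
```

With something like this in place, a query that forgets its `WHERE tenant_id = ?` clause returns only the current tenant's rows instead of leaking data. The application should connect as a non-superuser role, since Postgres superusers bypass Row-Level Security.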

Decision 3: where to host

The hosting decision affects both initial cost and migration cost. Both matter.

Vercel or Netlify for the frontend, AWS Lambda or Render for the backend, RDS or Supabase for the database. The "modern SaaS" stack. Fast to start, expensive at scale (Vercel function pricing bites past a certain point), opinionated. Right for content-heavy MVPs and frontend-heavy products.

AWS end to end (EC2 or ECS or Lambda, RDS, S3, CloudFront). More flexibility, more operational responsibility. The platform that scales the longest. Right when you have someone on the team comfortable with AWS or you are deploying alongside other AWS workloads.

Fly.io or Railway or Render. The "AWS for people who do not want AWS" tier. Easier than raw AWS, more flexible than Vercel. Good middle ground for teams without dedicated DevOps capacity. We deploy here for clients who want self-hosting flexibility without managing the underlying infrastructure.

On-premises or sovereign cloud (OVH, Scaleway, Hetzner). Right for European clients with strict GDPR requirements or French clients who specifically want EU-hosted infrastructure. The architectural pattern is the same as in our self-hosted n8n on AWS work; the principles translate to the SaaS use case.

The cost trajectory matters more than the absolute month-one cost. We have seen MVPs on Vercel that worked great until month 10 when the bill hit $4K/month for a product generating $8K/month in revenue. The migration cost away from Vercel at that point was higher than the migration cost would have been if they had started on Render.

Decision 4: authentication, billing, and the build-vs-buy on infrastructure

The MVPs that ship faster make aggressive build-vs-buy decisions on infrastructure features. The ones that ship slower try to build everything themselves.

Authentication. Buy. Auth0, Clerk, Supabase Auth, AWS Cognito, WorkOS for enterprise SSO. Building auth from scratch for an MVP is a 4-week project that wastes time you do not have. The cost is real ($50 to $500/month at MVP scale) but the time saved is worth far more.

Billing. Buy. Stripe is the obvious choice, with Stripe Billing handling subscriptions, invoicing, and tax for most B2B SaaS. The exceptions: products with complex usage-based billing where Lago or Orb may fit better, or products that need extensive accounting integration where a more flexible tool than Stripe might be required.

Transactional email. Buy. SendGrid, Postmark, Resend, AWS SES. The deliverability infrastructure is not something to build. Pick one, configure SPF/DKIM/DMARC, move on.

Search. Mixed. Postgres full-text search handles the MVP for most products. Algolia, Meilisearch, or Typesense are right when you genuinely need ranked search across millions of records and the Postgres approach is not fast enough.

File storage. Buy. S3 or Cloudflare R2. No exceptions.

Background jobs. Mixed. A simple cron-like setup with the database as the queue (using SELECT FOR UPDATE SKIP LOCKED) handles the MVP. Move to a real queue (SQS, Sidekiq, BullMQ) when you actually need it, usually around the time you have multiple workers running.

The pattern: every commodity feature is "buy" until you have a specific reason to build. The exceptions are the features that are core to your product's differentiation, which you must build because they are the product.
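The database-as-queue approach mentioned under background jobs can be sketched roughly as follows. The `jobs` table and its columns (`id`, `payload`, `status`, `created_at`) are hypothetical, and `cursor` stands for any DB-API 2.0 cursor connected to Postgres; this is one common shape for the technique, not the only one.

```python
"""Sketch: claim one job from a Postgres table used as a queue."""
from typing import Any, Optional

# Assumption: a hypothetical `jobs` table with id, payload, status, created_at.
CLAIM_JOB_SQL = """
UPDATE jobs
SET status = 'running'
WHERE id = (
    SELECT id
    FROM jobs
    WHERE status = 'pending'
    ORDER BY created_at
    LIMIT 1
    FOR UPDATE SKIP LOCKED
)
RETURNING id, payload;
"""


def claim_next_job(cursor: Any) -> Optional[tuple]:
    """Mark the oldest pending job as running and return it, or None if idle.

    SKIP LOCKED makes concurrent workers skip rows that another transaction
    has already locked instead of waiting on them, so two workers do not
    claim the same job.
    """
    cursor.execute(CLAIM_JOB_SQL)
    return cursor.fetchone()
```

A worker would call `claim_next_job` in a loop, commit after each claim, and sleep briefly when it returns `None`. Retries and failure states are left out of the sketch.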

Decision 5: instrumentation from day one

Every MVP should ship with three things instrumented before customer #1 logs in:

Application performance monitoring. Sentry, Honeycomb, Datadog, or a similar tool. You need to know when errors happen and which user encountered them. Cheap at MVP scale ($25 to $100/month). Invaluable when a customer complains and you can show them the exact error trace.

Product analytics. Mixpanel, PostHog, Amplitude. Event-level tracking of what users do in the product. You will never make the right product decisions if you do not know which features users actually use. PostHog is open-source and self-hostable, which is the path we typically take for clients with data sovereignty requirements.

Business metrics dashboard. A simple dashboard (often Looker Studio, Metabase, or Retool) showing MRR, active users, churn, and the 5 to 10 metrics that matter to the business. We covered the BI architecture for Shopify earlier this month in our Looker Studio + BigQuery post; the same patterns apply to SaaS.

The MVPs that ship without instrumentation rebuild it under pressure when investors ask for metrics. Build it from week one.

⚠️ The most common MVP failure pattern we see is "we will instrument it later." Later is never a good time because there is always something urgent. Three months in, the team does not know which features customers use, churn is happening invisibly, and the cofounder meeting becomes a debate about what to build next based on opinions rather than data. Instrument from week one. The cost is trivial compared to the cost of flying blind.
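To make "event-level tracking" concrete, here is a minimal sketch that emits one JSON log line per product event using only the Python standard library. It is a stand-in for whichever analytics tool is chosen, not the API of Mixpanel, PostHog, or Amplitude. The function name `track_event` and the field names are assumptions made for illustration.

```python
"""Sketch: week-one product event tracking as structured JSON log lines."""
import json
import logging
import time

logger = logging.getLogger("product_events")


def track_event(name: str, tenant_id: str, user_id: str, **properties) -> str:
    """Emit one product event as a JSON log line and return that line."""
    line = json.dumps(
        {
            "event": name,
            "tenant_id": tenant_id,  # which account did it
            "user_id": user_id,      # which person did it
            "ts": time.time(),       # Unix timestamp in seconds
            "properties": properties,
        },
        sort_keys=True,
    )
    logger.info(line)
    return line
```

A call such as `track_event("report_exported", tenant_id="t_1", user_id="u_9", format="csv")` at the end of a request handler is enough to answer "which features do customers use" later, whether the lines end up in a log aggregator or are forwarded to a dedicated analytics tool.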

The decision framework

For scoping a SaaS MVP build, we score the project on four axes:

- Product complexity: novel workflow with unclear data model (3), complex but well-understood domain (1), simple CRUD-like product (0)?

- Team technical depth: experienced senior engineers (3), one experienced engineer plus juniors (1), no engineers, first-time founder (0)?

- Time pressure: hard 6-month deadline (3), 9 to 12 months (1), flexible (0)?

- Regulatory or data-sovereignty constraints: regulated industry or strict EU residency (3), some compliance needs (1), none (0)?

Score 0 to 3: A no-code or low-code starter (Bubble, Retool, Glide) may ship faster than a coded MVP. The product is simple enough, the team's engineering is not strong enough, and the constraints are light enough that custom code is overkill.

Score 4 to 7: Standard SaaS MVP stack. Next.js or Remix for the frontend, Node or Python for the backend, Postgres, Vercel or Render for hosting, Stripe for billing, Auth0 or Clerk for auth. Ship in 8 to 14 weeks. This is the band where most of our SaaS MVP engagements live.

Score 8 to 12: Custom architecture, likely AWS-hosted with infrastructure-as-code, considered data model, and explicit data sovereignty controls. Build runs 4 to 8 months. The complexity justifies the engineering investment because the product is genuinely novel or the compliance requirements are non-negotiable.

This is a heuristic, not a verdict. We have shipped MVPs in 6 weeks for clients scoring 8 (when the team was very senior and the scope was tight) and 6 months for clients scoring 4 (when the product evolved during the build).
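The scoring above is simple enough to express as a small function. The sketch below assumes each axis takes one of the three values listed (0, 1, or 3) and maps the total to the three bands. The axis keys and band labels are shorthand chosen for this example.

```python
"""Sketch: score an MVP project on the four axes and pick a band."""

AXES = ("product_complexity", "team_depth", "time_pressure", "constraints")
ALLOWED_VALUES = {0, 1, 3}  # the only per-axis scores used in the framework


def mvp_band(scores: dict[str, int]) -> tuple[int, str]:
    """Return (total score, recommended band) for one project."""
    if set(scores) != set(AXES):
        raise ValueError(f"expected exactly these axes: {AXES}")
    for axis, value in scores.items():
        if value not in ALLOWED_VALUES:
            raise ValueError(f"{axis} must be 0, 1, or 3, got {value}")
    total = sum(scores.values())
    if total <= 3:
        band = "no-code or low-code starter"
    elif total <= 7:
        band = "standard SaaS MVP stack"
    else:
        band = "custom architecture"
    return total, band
```

For example, a novel product (3) built by one experienced engineer plus juniors (1) with a flexible timeline (0) and no compliance constraints (0) totals 4, which lands in the standard-stack band.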

What an MVP build actually looks like

For a typical SaaS MVP build at Sentinu, the engagement breaks into:

Weeks 1 to 2: discovery and architecture. Map the user workflows. Define the data model (this is the single most important week of the project). Pick the stack decisions discussed above. Set up the development environment, CI/CD pipeline, and initial infrastructure.

Weeks 3 to 6: core build. The one workflow that defines the product. Sign-up, the primary user action, the result, the path back. Authentication, billing, the data model. Everything else is deferred.

Weeks 6 to 9: secondary workflows. Admin panel, settings, account management, the second and third user workflows. By this point, the primary workflow is being used by internal testers and the team is iterating.

Weeks 9 to 12: beta and launch. First paying customers. Bug fixes, performance work, the inevitable "we discovered the data model needs one more field" moments. Production deployment with monitoring, alerting, on-call rotation.

Weeks 12 onwards: operate and iterate. The MVP is live. Customer feedback drives the roadmap. The team's job shifts from building to listening and iterating.

A focused MVP at our pace ships in 10 to 14 weeks. The variability is in scope, not in the platform. The MVPs that take longer typically tried to ship too much in the initial scope rather than deferring to a v1.1.

What this costs

Honest cost ranges for SaaS MVP development engagements:

| Project shape | Total engineering cost | Monthly infrastructure |
|---|---|---|
| Standard SaaS MVP, one core workflow, common stack | $40K to $90K | $100 to $400 |
| MVP with one external integration (Shopify, HubSpot, Salesforce) | $60K to $120K | $150 to $500 |
| MVP with compliance constraints (GDPR, HIPAA-light) | $80K to $180K | $300 to $800 |
| Enterprise-grade MVP with SSO, audit logging, multi-tenant isolation | $120K to $300K | $500 to $2,000 |

The infrastructure cost is the part founders consistently underestimate. A Vercel-hosted MVP at $40/month in week one is at $400/month at 50 customers and $2,000/month at 500. The migration cost away from a hosting platform you chose for development convenience can be $20K to $60K depending on architecture. The platform choice in week 1 has consequences in month 24.
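One way to keep the cost trajectory visible is to track two ratios month over month: infrastructure cost per customer and infrastructure cost as a share of revenue. The sketch below uses only figures quoted in this post. The function names are illustrative.

```python
"""Sketch: two ratios for watching infrastructure cost as customers grow."""


def cost_per_customer(customers: int, monthly_cost_usd: float) -> float:
    """Monthly infrastructure cost divided by the number of customers."""
    return monthly_cost_usd / customers


def infra_share_of_revenue(monthly_cost_usd: float, monthly_revenue_usd: float) -> float:
    """Fraction of monthly revenue consumed by infrastructure."""
    return monthly_cost_usd / monthly_revenue_usd


# Figures from the text: $400/month at 50 customers, $2,000/month at 500.
at_50 = cost_per_customer(50, 400)      # 8.0 USD per customer
at_500 = cost_per_customer(500, 2000)   # 4.0 USD per customer

# The month-10 example from the hosting section: a $4K bill on $8K revenue.
share = infra_share_of_revenue(4000, 8000)  # 0.5, half of revenue
```

In these figures the per-customer cost falls while the absolute bill grows fivefold, which is why the share-of-revenue ratio is usually the more useful alarm.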

When to skip the MVP and validate differently

The honest counter-case. Not every product idea needs a coded MVP.

Validate the demand first. If you have not had 20 conversations with potential customers who said they would pay for the product, you do not need an MVP. You need more conversations. The MVPs that fail most consistently are the ones built before product-market fit was even hypothesized.

Concierge MVP. Deliver the value manually to the first 5 to 10 customers before automating it. If you cannot deliver the value manually, the product is not solving the right problem. If you can, you have proof that the automation is worth building.

Landing page test. A waitlist landing page with a clear value proposition and 200 sign-ups in 30 days is more valuable than an MVP built in isolation. We have advised founders to delay MVP builds for 8 weeks while they validated demand, and the resulting MVP shipped faster and survived longer.

We genuinely turn away MVP engagements when we think the founder needs to validate first rather than build. Of the inquiries we receive from founders who want an MVP, around 70 percent are ones we conclude should build one.

What we ship at Sentinu

Recent SaaS MVP engagements illustrate the range:

- A French B2B compliance tool, Next.js + Node.js + Postgres on Scaleway (EU residency was non-negotiable). 14-week build. Live with 6 enterprise customers in month one.

- A UK fintech MVP, Remix + Postgres on Fly.io, with extensive Stripe Connect integration for marketplace billing. 16-week build, 3 months of pre-MVP validation work.

- A Canadian B2B SaaS for the construction industry, Next.js + Python (Django) + Postgres on AWS, with offline-first mobile companion app. 22-week build, 8 customers from a 50-customer waitlist established before development began.

The pattern: the MVPs that worked had founders who knew exactly which workflow they were building, validated demand before development, and treated the engineering as the most strategic decision of the early company. The ones we declined typically had founders who were excited about a product idea but had not yet earned the right to build it.

FAQ

Should I use TypeScript or plain JavaScript for the MVP?

TypeScript, for any project expected to live more than 6 months. The startup cost is small (a few extra hours of setup) and the productivity gain from caught bugs and refactoring confidence compounds heavily. The exceptions are very small MVPs (under 5,000 lines of code) where the type-checking overhead is not justified, but most MVPs grow past that.

What about AI-generated code or AI pair programming?

Use it. AI tools (Cursor, Windsurf, Claude Code, GitHub Copilot) genuinely accelerate MVP development for the experienced engineer who knows when to accept the output and when to override it. The trap is junior engineers shipping AI-generated code they do not understand, which creates a maintainability liability. As a productivity multiplier for senior engineers, AI tools are now standard. As a substitute for senior engineering judgment, they are not.

How much should the MVP cost compared to my fundraising?

Rule of thumb: MVP development cost should be no more than 30 to 40 percent of your seed runway, leaving budget for the 6 to 12 months of post-MVP iteration that determines product-market fit. An MVP that consumes 70 percent of seed funding leaves the company unable to iterate after launch, which is when iteration matters most.
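The rule of thumb is easy to turn into arithmetic. The sketch below computes the 30 to 40 percent ceiling for a given seed amount. The $500,000 seed in the usage note is a hypothetical figure chosen only to show the calculation.

```python
"""Sketch: MVP budget ceiling as 30 to 40 percent of seed funding."""


def mvp_budget_range(seed_usd: int) -> tuple[int, int]:
    """Return the (30 percent, 40 percent) budget bounds in whole dollars."""
    return seed_usd * 30 // 100, seed_usd * 40 // 100


def share_of_seed(mvp_cost_usd: int, seed_usd: int) -> float:
    """Fraction of the seed consumed by the MVP build."""
    return mvp_cost_usd / seed_usd
```

For a hypothetical $500,000 seed, `mvp_budget_range(500_000)` gives a ceiling of $150,000 to $200,000. An MVP quoted at $350,000 would come out at `share_of_seed(350_000, 500_000) == 0.7`, the 70 percent case described above.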

Build with a freelancer or an agency?

For an MVP, the deciding factor is engineering depth and continuity. A senior freelancer with a documented MVP track record can deliver an MVP comparable to an agency, often at lower cost. The risk is the 12-month post-MVP period when bugs need fixing and iterations need shipping. If the freelancer becomes unavailable, the MVP often gets rebuilt. Agencies offer succession and team continuity at a higher cost. The right answer depends on your tolerance for that risk.

What if my MVP needs to be live in 6 weeks?

The realistic shape of a 6-week MVP: one workflow, one user type, no admin panel, no multi-tenancy beyond the basics, no analytics beyond the most basic. Most products cannot fit into 6 weeks honestly. The ones that can are products where the workflow is genuinely simple (a content scheduler, a basic form-to-database app, a Slack integration). Founders who think their product fits into 6 weeks usually have not yet seen the data model complexity that emerges from real customer needs.

How does this connect to your Shopify work?

Many of our SaaS MVPs are tools that integrate with Shopify (inventory tools, post-purchase apps, B2B portals). The Shopify integration work covered in our Shopify NetSuite integration post and Custom App vs Public App post is the same engineering pattern: API integration, webhook handling, data model design. The principles transfer cleanly.

Should the MVP be open source or proprietary?

Almost always proprietary for the MVP. Open source is a strategic decision (community building, developer adoption, freemium business model) that should be made deliberately, not by default. Most SaaS MVPs benefit from being proprietary while the product-market fit is being figured out; open source becomes an option later once the value proposition is clearer.

Where to take this next

If you are scoping a SaaS MVP build and the framework above narrowed the decision space but you want a partner to ship the build, that is what our SaaS and web app development practice covers. We scope, architect, and ship MVPs where the validation work has been done and the engineering decisions are the next bottleneck. If the build also involves integration with Shopify or other commerce platforms, our API and system integration practice is the companion. And if the conversation is still in "validate first" territory, we will say so and recommend the validation work before the engineering.

Related Topics

Related posts

View all articles

Shopify DevelopmentMay 12, 2026

Shopify Scripts Are Dead in 48 Days: The Functions Migration Playbook for Plus Stores

June 30, 2026 is a hard wall. Scripts editing is already locked. If your checkout discounts, shipping rules, or payment logic still run on Scripts, here is the migration playbook, the failure modes, and what it costs.

13 min read

Technical SEOApr 30, 2026

Programmatic SEO in 2026: When It Still Works, When Google Penalizes It, and How to Tell the Difference

Programmatic SEO is not dead. It is just no longer easy. Here is the framework we use in 2026 to decide whether a programmatic SEO project will compound traffic or get nuked by the Helpful Content system in six months.

14 min read

Shopify DevelopmentApr 21, 2026

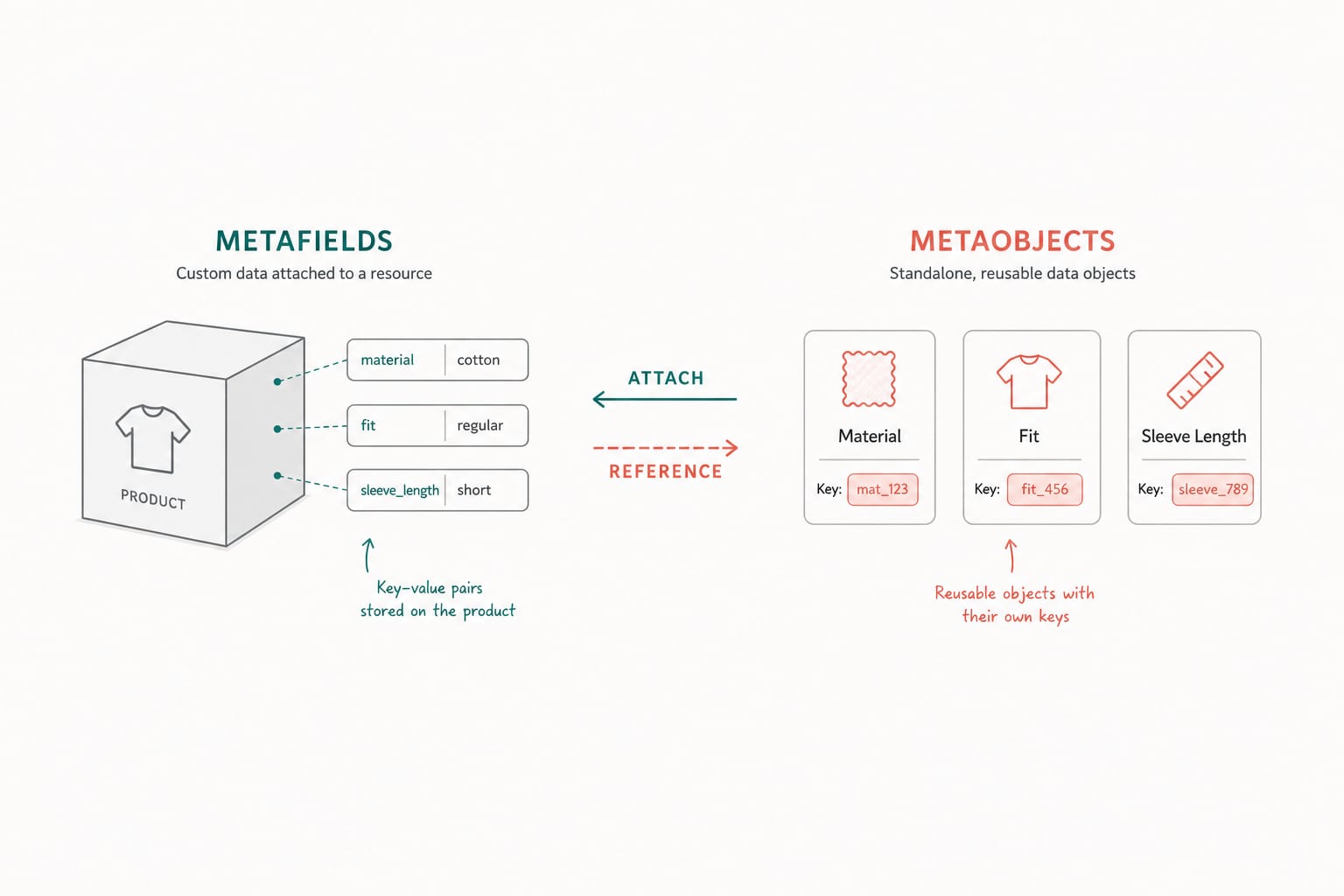

Shopify Metaobjects and Metafields: A Developer's Guide to Structured Content in 2026

Metafields attach data to existing resources. Metaobjects are standalone records you can reference anywhere. Here is when to use which, how to model them, and the API patterns that scale across thousands of products.

12 min read