Programmatic SEO in 2026: When It Still Works, When Google Penalizes It, and How to Tell the Difference

Programmatic SEO is not dead. It is just no longer easy. Here is the framework we use in 2026 to decide whether a programmatic SEO project will compound traffic or get nuked by the Helpful Content system in six months.

14 min read

Programmatic SEO had a brutal 2024 and a complicated 2025. Site after site published tens of thousands of templated pages, ranked beautifully for six months, and then got nuked by a Helpful Content update or the March 2024 spam policies. The reaction was an industry-wide pendulum swing: "programmatic SEO is dead," "Google killed it," "do real content instead."

That take is wrong. The math is more interesting.

Programmatic SEO still works in 2026. It is just no longer easy. The pages that survive Google's updates and get cited by AI agents share a small set of structural properties, and the pages that get destroyed share an equally identifiable set. The difference is not how many pages you publish. It is what data sits underneath them.

This post is the framework we use when a client asks "should we build a programmatic SEO project?" Some of those projects we take on. Most we recommend against. The decision is rarely about ambition or budget. It is about whether the underlying data justifies the pages.

What programmatic SEO actually is

The term is used loosely. Let us tighten it.

Programmatic SEO is the practice of generating many pages from a structured dataset and a template, where each page targets a long-tail query that maps to a row or combination of rows in that dataset. The classic shapes are [product] in [city], [tool] vs [tool], [job title] salary in [country], best [thing] for [use case], and how to [task] in [software].
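To make the definition concrete, here is a minimal sketch of the "dataset plus template" mechanic in Python. The rows, field names, and salary figures are made-up placeholders for illustration, not real data, and a production project would read from an owned data source rather than a hard-coded list.

```python
from string import Template

# Hypothetical rows with placeholder values; a real project would load
# these from its own dataset.
ROWS = [
    {"job": "Software Engineer", "country": "France", "median_salary": 52000, "currency": "EUR"},
    {"job": "Data Analyst", "country": "Germany", "median_salary": 55000, "currency": "EUR"},
]

# One template shared by every page in the set.
PAGE_TEMPLATE = Template(
    "<h1>$job salary in $country</h1>\n"
    "<p>Median salary: $median_salary $currency</p>"
)


def render_pages(rows):
    """Return one rendered page per dataset row, keyed by the long-tail query it targets."""
    pages = {}
    for row in rows:
        query = f"{row['job']} salary in {row['country']}".lower()
        pages[query] = PAGE_TEMPLATE.substitute(row)
    return pages


pages = render_pages(ROWS)
```

Each row becomes exactly one page and one target query. Everything that distinguishes one page from the next has to come from the row, which is why the rest of this post keeps returning to the data.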

The reason it works at all is that long-tail queries collectively represent the majority of search volume but each individual query has too little volume for a human-written article to be economical. If you can serve 50,000 long-tail queries with one template and one dataset, you have built a content asset that no manual content team can match on cost.

The reason it fails is that the same property, many pages from one template, looks identical to scaled spam unless the pages are differentiated by genuinely useful, query-specific data. Google's spam policies in 2024 and 2025 explicitly named "scaled content abuse" as a category, and the Helpful Content system was retrained to identify pages that exist primarily for search engines rather than people. Programmatic SEO without underlying value is exactly that pattern.

The six things that decide whether your project survives

Run a programmatic SEO project past these six tests before writing a single line of code. We have audited enough post-mortems to know that projects that pass all six survive Google updates and AI agent scrutiny. Projects that fail two or more get filtered out within twelve months.

Test 1: Does each page contain data a user could not get elsewhere in one query?

This is the single most important test. If your [restaurant] in [neighborhood] page is a list of restaurant names with hours pulled from Google Maps, the user could have stayed on Google Maps. The page has no reason to exist.

If your page includes the average price per cover by neighborhood, the cuisine distribution, the percentage of restaurants open after 11pm, and links to make a reservation, the user got something they could not have assembled in one query. That is the difference between scaled content and a useful directory.

Tighten the test: imagine the user has a Google search bar open. If they could answer their question with one search that does not lead to your page, your page is providing zero marginal value. Google's algorithm makes the same judgment.

Test 2: Is the data fresh, or is it frozen in time?

Pages whose data was scraped once in 2022 and never updated are easy to identify. The product prices are wrong, the company is now under a different name, the law referenced changed, the population number is from the last census two cycles ago. AI agents catch this faster than Google does because they cross-reference against multiple sources.

A programmatic SEO project that does not have a refresh pipeline as part of the architecture is not a project. It is content debt. The refresh cadence depends on what changes: weekly for pricing pages, monthly for product comparisons, quarterly for industry stats, annually for evergreen attribute pages.
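A staleness check along these lines could sit at the front of a refresh pipeline. This is a sketch under assumptions: the page-type labels, the record shape, and the example URLs are hypothetical, and the cadences are the ones from the paragraph above rounded to days.

```python
from datetime import date, timedelta

# Cadences from the paragraph above; the page-type keys are hypothetical labels.
REFRESH_CADENCE = {
    "pricing": timedelta(days=7),
    "product_comparison": timedelta(days=30),
    "industry_stats": timedelta(days=91),
    "evergreen_attributes": timedelta(days=365),
}


def is_stale(page_type, last_refreshed, today):
    """True when the page's data is older than the cadence for its type."""
    return today - last_refreshed > REFRESH_CADENCE[page_type]


def stale_pages(pages, today):
    """Return the URLs of pages that are overdue for a data refresh."""
    return [p["url"] for p in pages if is_stale(p["type"], p["last_refreshed"], today)]


# Hypothetical page records for illustration.
example_pages = [
    {"url": "/pricing/acme-crm", "type": "pricing", "last_refreshed": date(2026, 1, 1)},
    {"url": "/stats/saas-churn", "type": "industry_stats", "last_refreshed": date(2026, 1, 1)},
]
overdue = stale_pages(example_pages, today=date(2026, 1, 20))
```

In this example only the pricing page is overdue: 19 days have passed, which exceeds the weekly cadence but not the quarterly one.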

Test 3: Do enough pages have meaningful, page-specific content beyond the template?

The template provides the consistent structure. The data provides the differentiation. But the template-plus-data alone is rarely enough. Pages that rank in 2026 typically also have:

- A page-specific summary or analysis (which can be generated, but must be specific to that page's data, not boilerplate)

- Examples or scenarios specific to the page's combination of variables

- A way for users to take a meaningful action from the page

The bar is roughly that 30% to 50% of the words on each page should be specific to that page rather than shared with every other page in the set. Templates with 90% shared content get filtered.
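One rough way to estimate that share is to split each page into overlapping three-word shingles and count how many of them do not appear on every page in the set. This is an assumed approximation for auditing purposes, not how Google measures anything, and the function names and sample text are hypothetical.

```python
def shingles(text, n=3):
    """Split text into overlapping n-word tuples."""
    words = text.lower().split()
    return [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]


def page_specific_share(pages, n=3):
    """For each page, the fraction of its shingles that are not found on every page.

    Expects a non-empty dict of {url: visible text}.
    """
    shingle_lists = {url: shingles(text, n) for url, text in pages.items()}
    # Shingles present on every page are treated as template boilerplate.
    common = set.intersection(*(set(s) for s in shingle_lists.values()))
    return {
        url: (sum(1 for sh in s if sh not in common) / len(s)) if s else 0.0
        for url, s in shingle_lists.items()
    }


# Tiny hypothetical pages: the first four words come from the template.
share = page_specific_share({
    "/a": "compare pricing and features alpha costs ten",
    "/b": "compare pricing and features beta costs six",
})
```

Run against the visible text of a real page set, a result well below 0.3 for most pages is a signal that the template is doing too much of the work.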

Test 4: Is the URL structure clean and stable?

Programmatic projects often start with messy URLs. Parameters, dynamic IDs, weird casing. Long-term these are toxic. The URL structure should be:

- Lowercase, hyphenated, no parameters

- Hierarchical when there is a real hierarchy (/jobs/software-engineer/paris)

- Flat when there is not (/saas-tools/email-marketing-vs-marketing-automation)

- Stable. Pages that change URL on every regeneration lose all their link equity.

The decision to make URLs hierarchical or flat is not aesthetic. It depends on whether users browse by the hierarchy. If users navigate from /jobs to /jobs/software-engineer to /jobs/software-engineer/paris, the hierarchy is real. If they only land on the leaf page, the hierarchy is theater.
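The URL rules above can be enforced in one small helper. A minimal sketch using only the Python standard library; `slugify` and `build_url` are hypothetical names, and the sketch assumes the segments come from stable attributes such as a job title or city, never from row IDs or timestamps that change on regeneration.

```python
import re
import unicodedata


def slugify(value):
    """Lowercase, ASCII-only, hyphen-separated slug."""
    # Decompose accented characters, then drop anything that is not ASCII.
    ascii_text = unicodedata.normalize("NFKD", value).encode("ascii", "ignore").decode("ascii")
    # Collapse every run of other characters into a single hyphen.
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def build_url(*segments):
    """Join slugified segments into a parameter-free path."""
    return "/" + "/".join(slugify(segment) for segment in segments)


hierarchical = build_url("jobs", "Software Engineer", "Paris")
flat = build_url("saas-tools", "Email Marketing vs Marketing Automation")
```

Because the slug is a pure function of the segment text, regenerating the site from the same data yields the same URLs.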

Test 5: Do the pages link to each other in a useful graph, not a spam graph?

Internal linking on programmatic SEO sites is the easiest tell of low-quality intent. A page about "Italian restaurants in the 11th arrondissement" should link to "Italian restaurants in nearby arrondissements" and "Italian restaurants in similar price ranges in the 11th," not to 47 unrelated pages stuffed in a footer.

The rule we use: the links from a page should be the links a user on that page might actually want. If the link target is not something a real user would click, Google will not count it as an endorsement either.

Test 6: Is the underlying business sustainable without this traffic?

This is the test most teams skip and the one that matters most for risk management. Programmatic SEO traffic can compound for years and then disappear in a single update. If your business model collapses without it, you are building on sand.

The healthy pattern is: the programmatic pages bring users in, the product converts them, the product creates value that does not depend on continued search visibility. Customer success, retention, expansion, word of mouth, all of these have to work independently. Programmatic SEO is then a customer acquisition channel, not the business.

We have seen the failure pattern enough times to call it: a startup builds 50,000 programmatic pages, ranks for 200,000 long-tail queries, converts at 0.8%, builds a business on top of the traffic, and a Google update in month 18 wipes 70% of it. They never recover because the acquisition channel was the business.

The three shapes that still work

Across the projects we have audited that survived 2024 and 2025 and are still compounding into 2026, three shapes recur. If your project does not fit one of these, the bar is much higher and the failure rate is much higher.

Shape A: Authoritative directory backed by proprietary data

A directory that lists entities (restaurants, lawyers, software, courses, properties) where the data is curated, verified, kept fresh, and includes attributes that are not trivially available elsewhere. Examples that have survived: legal directories with verified bar admissions, software directories with normalized pricing and integration data, real estate platforms with consolidated multi-broker data.

The defensibility comes from the data, not the SEO. The SEO compounds because the data is genuinely useful.

Shape B: Comparison and decision-support pages with real reasoning

[Tool A] vs [Tool B] pages work when they actually compare. The page needs criteria (specific to the category), evaluations against each criterion (specific to each tool), and a verdict (specific to use cases). The template provides the structure. The content per cell is hand-curated or, increasingly, generated from a structured database with human review.

The failure mode is [Tool A] vs [Tool B] pages where the comparison is empty: each cell says "supports email," "has integrations," "user-friendly." Pages like this fail every test above and get filtered.

Shape C: Local pages backed by a real local service

[Service] in [city] works when the service is actually delivered in the city. A plumbing company with technicians in 40 French cities can credibly run 40 local pages. A SaaS that ships from one office in Paris cannot credibly run 200 location pages, and Google has been effective at distinguishing the two since 2024.

The test is whether someone in the listed city, ringing the phone number on the page, would get served. If yes, the page has reason to exist. If no, you are pretending and the algorithm will eventually catch up.

What changed in 2024-2025 (and what is different in 2026)

For context on why so many programmatic projects died, here is the short version.

Google's March 2024 core update shipped alongside new spam policies that explicitly targeted scaled content abuse. The policy update made it possible for Google to act against sites that produce content at scale primarily to manipulate search rankings, regardless of whether AI, automation, or humans are involved. Many programmatic SEO sites that had ranked for years were demoted within weeks.

The Helpful Content system, which had been a separate signal, was integrated into the core ranking algorithm in the same release. This made it impossible to recover quickly: previously a site could lose helpful content scoring, fix the issue, and recover in a future refresh. Once it was core, the recovery path became "rebuild whatever Google's broader signal is unhappy about," which is murkier.

Through 2025, the AI search surfaces (ChatGPT, Perplexity, Google AI Overviews, Microsoft Copilot) added a second filter. AI agents cite sources, and they prefer sources with depth, specificity, and verifiable claims. Thin programmatic pages get filtered out of AI citations even when they technically rank in classical Google.

In 2026, the bar is higher than ever. The good news is that the bar is consistent. Pages that provide genuine value to a query are visible across classical Google search, Google AI Mode, ChatGPT, and Copilot simultaneously. Pages that do not are filtered by all of them.

A programmatic SEO project in 2026 should be designed simultaneously for human users, classical Google ranking, and AI agent citation. Optimizing only for one of the three is a recipe for either failure today (humans bounce, conversion is zero) or failure in a future update (the algorithm catches up).

The decision framework, in order

When a client asks us about a programmatic SEO project, we walk through these steps in this exact order:

Step 1: What is the user actually trying to accomplish? Write the query the user would type. Write the answer they need. Write the alternatives they have today. If the alternatives are good enough, do not build the page.

Step 2: What is the underlying dataset? Where does it come from, how fresh is it, how proprietary is it, how hard is it to maintain? If the answer is "we are going to scrape Google Maps once," the project is dead before it starts.

Step 3: What is the per-page differentiation? What changes between pages? If the answer is "the city name and the headline," the project is scaled content. If the answer is "all the structured attributes plus a specific summary plus the relevant local entities," the project might work.

Step 4: How many pages, realistically? Long-tail queries with meaningful volume are scarcer than they look. Most categories support 200 to 2,000 useful programmatic pages, not 50,000. Be skeptical of plans for tens of thousands of pages on a topic that does not warrant them.

Step 5: What is the maintenance plan? Who refreshes the data, on what cadence, with what monitoring? A programmatic SEO project without a maintenance plan is a one-shot publish that decays.

Step 6: What is the risk concentration? If this channel disappears, what happens to the business? If the answer is "we are out of business," the project is too risky and the strategy is incomplete.

Projects that pass all six are worth doing. Projects that fail one or two might still be worth doing if the failed criteria can be fixed. Projects that fail three or more should be abandoned in favor of something else.
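The thresholds in that last paragraph are simple enough to write down as a checklist function. This is only a sketch: the step keys are hypothetical labels for the six steps, and the pass/fail answers still have to come from an honest human review.

```python
# Hypothetical short labels for the six steps, in the order they are walked.
STEPS = (
    "user_goal",
    "dataset",
    "differentiation",
    "page_count",
    "maintenance",
    "risk_concentration",
)


def verdict(answers):
    """Map pass/fail answers for the six steps to a recommendation.

    A step that is missing from `answers` counts as failed.
    """
    failed = [step for step in STEPS if not answers.get(step, False)]
    if not failed:
        return "build", failed
    if len(failed) <= 2:
        return "fix the failed steps first", failed
    return "abandon", failed


# Example: everything passes except the maintenance plan.
answers = {step: True for step in STEPS}
answers["maintenance"] = False
decision, failed_steps = verdict(answers)
```

Returning the failed steps alongside the decision keeps the conversation on what would have to change, not just on the verdict.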

The implementation pattern we use

For the projects we do build, the technical pattern is consistent and not particularly exotic.

The data layer is a database (Postgres typically, sometimes Airtable for smaller projects) or a structured data source like a Shopify Metaobject collection or a Google Sheet. Critically, the data is owned by the business, not scraped on the fly. Scraped data dies; owned data compounds.

The render layer is a static site generator or framework (Next.js with on-demand revalidation, Astro, Hugo for high-page-count projects, or Hydrogen if it lives inside Shopify) that pulls from the data layer at build time and produces static HTML. We avoid client-side rendered programmatic pages; they are slower, more expensive to crawl, and tend to rank worse.

The internal linking is generated algorithmically but constrained to genuinely related pages. We compute similarity scores between pages (by shared attributes, geographic proximity, category overlap) and limit each page's outbound links to its top 8 to 12 related pages. No footer link blocks, no exhaustive "see also" lists.
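As an illustration of the constrained linking described above, the sketch below scores pages by Jaccard similarity over their attribute sets and keeps only the best matches. Jaccard is one possible similarity measure, chosen here for simplicity; the `min_score` cutoff, the function names, and the sample pages are assumptions for the example.

```python
def jaccard(a, b):
    """Share of attributes two pages have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def related_links(pages, url, max_links=12, min_score=0.2):
    """Return up to max_links URLs most similar to `url`, best match first."""
    source = pages[url]
    scored = [
        (jaccard(source, attrs), other)
        for other, attrs in pages.items()
        if other != url
    ]
    # Drop weak matches, then sort by score (descending) with the URL as a tie-breaker.
    ranked = sorted(
        (item for item in scored if item[0] >= min_score),
        key=lambda item: (-item[0], item[1]),
    )
    return [other for _, other in ranked[:max_links]]


# Hypothetical pages described by attribute sets.
pages = {
    "/restaurants/italian/paris-11": {"italian", "paris", "arr-11", "mid-price"},
    "/restaurants/italian/paris-10": {"italian", "paris", "arr-10", "mid-price"},
    "/restaurants/italian/paris-12": {"italian", "paris", "arr-12", "high-price"},
    "/restaurants/sushi/lyon-3": {"sushi", "lyon", "arr-3", "high-price"},
}
links = related_links(pages, "/restaurants/italian/paris-11")
```

The unrelated Lyon page never makes the list, however many slots are free. A page with only three genuinely related neighbors should link to three pages, not be padded up to twelve.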

The refresh pipeline runs on a schedule appropriate to the data. Pricing data refreshes daily. Geographic data refreshes monthly. Category-level statistics refresh quarterly. We monitor for changes and rebuild affected pages, not the entire site.
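One way to rebuild only the affected pages is to fingerprint each row's data and compare it with the fingerprint stored at the previous build. A minimal sketch, assuming rows are JSON-serializable dictionaries keyed by page URL; the function names and sample rows are hypothetical, and pages whose rows were deleted would need separate handling.

```python
import hashlib
import json


def row_fingerprint(row):
    """Stable hash of a row's data, independent of key order."""
    payload = json.dumps(row, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def pages_to_rebuild(rows, previous_fingerprints):
    """Return (changed URLs, current fingerprints) by comparing row hashes."""
    current = {url: row_fingerprint(row) for url, row in rows.items()}
    changed = sorted(
        url for url, fingerprint in current.items()
        if previous_fingerprints.get(url) != fingerprint
    )
    return changed, current


# First build: no stored fingerprints, so every page is rendered.
rows = {
    "/pricing/acme-crm": {"plan": "team", "price": 20},
    "/pricing/zen-crm": {"plan": "team", "price": 35},
}
first_run, stored = pages_to_rebuild(rows, previous_fingerprints={})

# Later refresh: one price changed, so only that page is rebuilt.
rows["/pricing/zen-crm"]["price"] = 39
second_run, stored = pages_to_rebuild(rows, stored)
```

Persisting the fingerprint map between runs (in the database or a build cache) is what keeps a daily pricing refresh from turning into a daily full-site rebuild.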

Observability is non-negotiable. We track per-page traffic, conversion, and inclusion in AI citations. Pages that consistently underperform are reviewed, improved, or removed. Programmatic SEO sites that never prune their own underperformers accumulate dead weight that eventually drags down the whole site.

FAQ

Is programmatic SEO worth it in 2026?

For projects that pass the six-test framework above, yes. For projects that do not, no. The blanket answers ("yes, it still works" or "no, Google killed it") are both wrong because they ignore the underlying property: programmatic SEO is a delivery mechanism. Whether the delivery mechanism succeeds depends entirely on what is being delivered.

How many pages should I publish?

As many as the data justifies. Most categories support a few hundred to a few thousand. If your data only supports 500 useful pages, publishing 50,000 is worse than publishing 500. The empty pages drag down the good ones.

Will AI search make programmatic SEO obsolete?

It changes the bar but does not eliminate the channel. AI agents need sources to cite. Sources with structured, verifiable data are exactly what they prefer. The pages that survive in classical Google also tend to be the pages cited by AI agents. The two filters point in the same direction.

Can I generate the page-specific content with AI?

Yes, with a major caveat. AI-generated text is fine when it is summarizing or explaining the page's underlying structured data. It is not fine when it is the page's only content. The bar is whether the content reflects something specific and true about that page, not whether a human or an AI wrote it.

How long until I see results?

Programmatic SEO typically takes 4 to 9 months to see meaningful traffic, with the curve continuing to compound for 18 to 36 months in the projects that succeed. Anyone promising faster results is either lucky, lying, or about to get penalized.

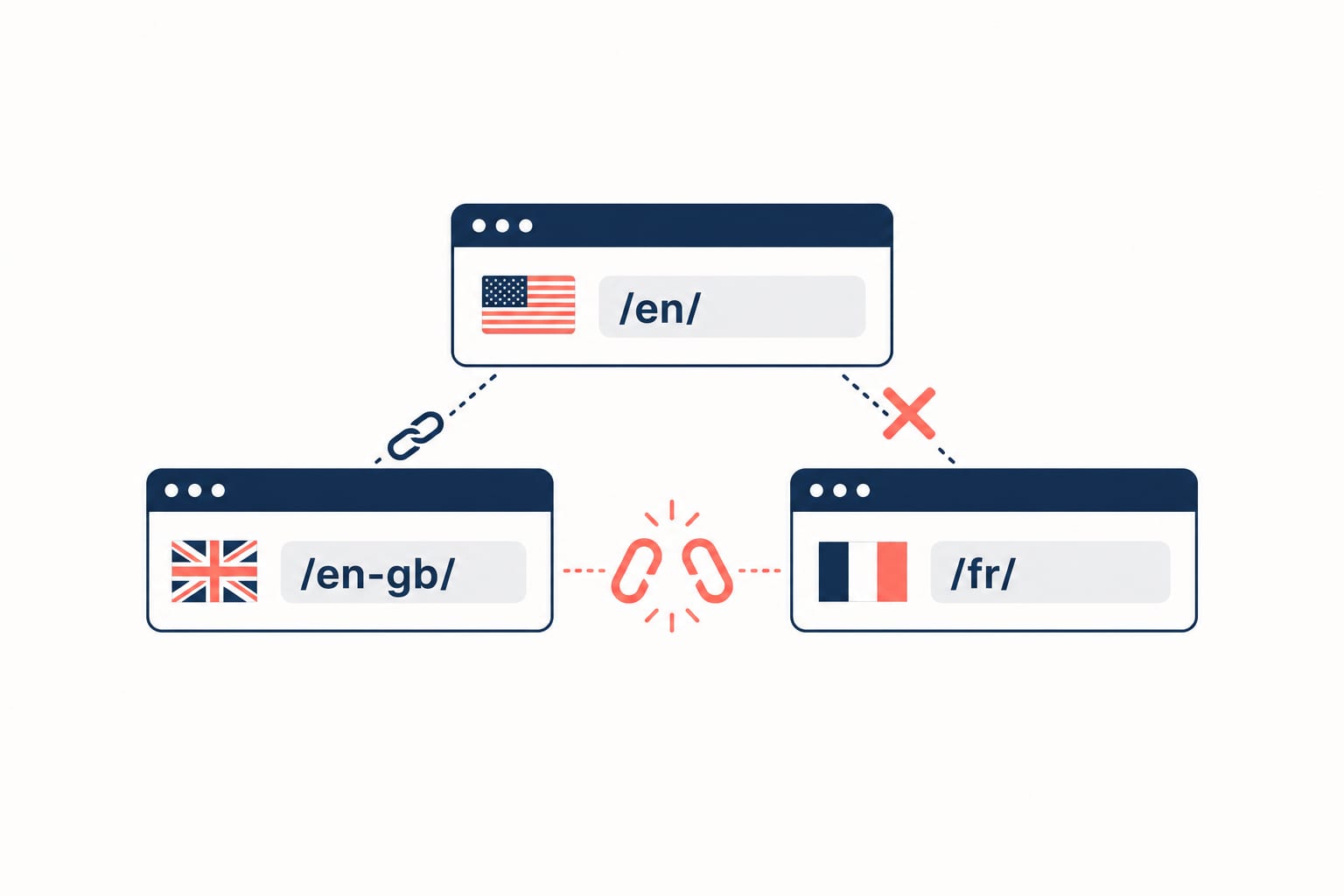

What about hreflang and international programmatic SEO?

Adding language and country variants multiplies the page count and the complexity. We covered the hreflang setup in our post on hreflang on Shopify for ecommerce, and the principles transfer. The key constraint: translations must be real translations against a verified local dataset. Auto-translated programmatic pages for markets where the data is not validated are exactly the pattern Google demoted in 2024.

Where this fits in our broader stack

Programmatic SEO is one channel in a wider technical SEO and content strategy. It pairs well with schema markup (which gives each programmatic page structured data for AI agents) and a clean metaobject architecture when the underlying dataset lives in Shopify.

If you are weighing whether a programmatic SEO project makes sense for your business, get in touch. We start every engagement with the six-test framework above. About half the conversations end with "this project is not a fit, here is what to do instead." The other half we build, and they tend to compound for years because we filtered out the structurally unsound ones at the start.

Related posts

View all articles

Technical SEOApr 14, 2026

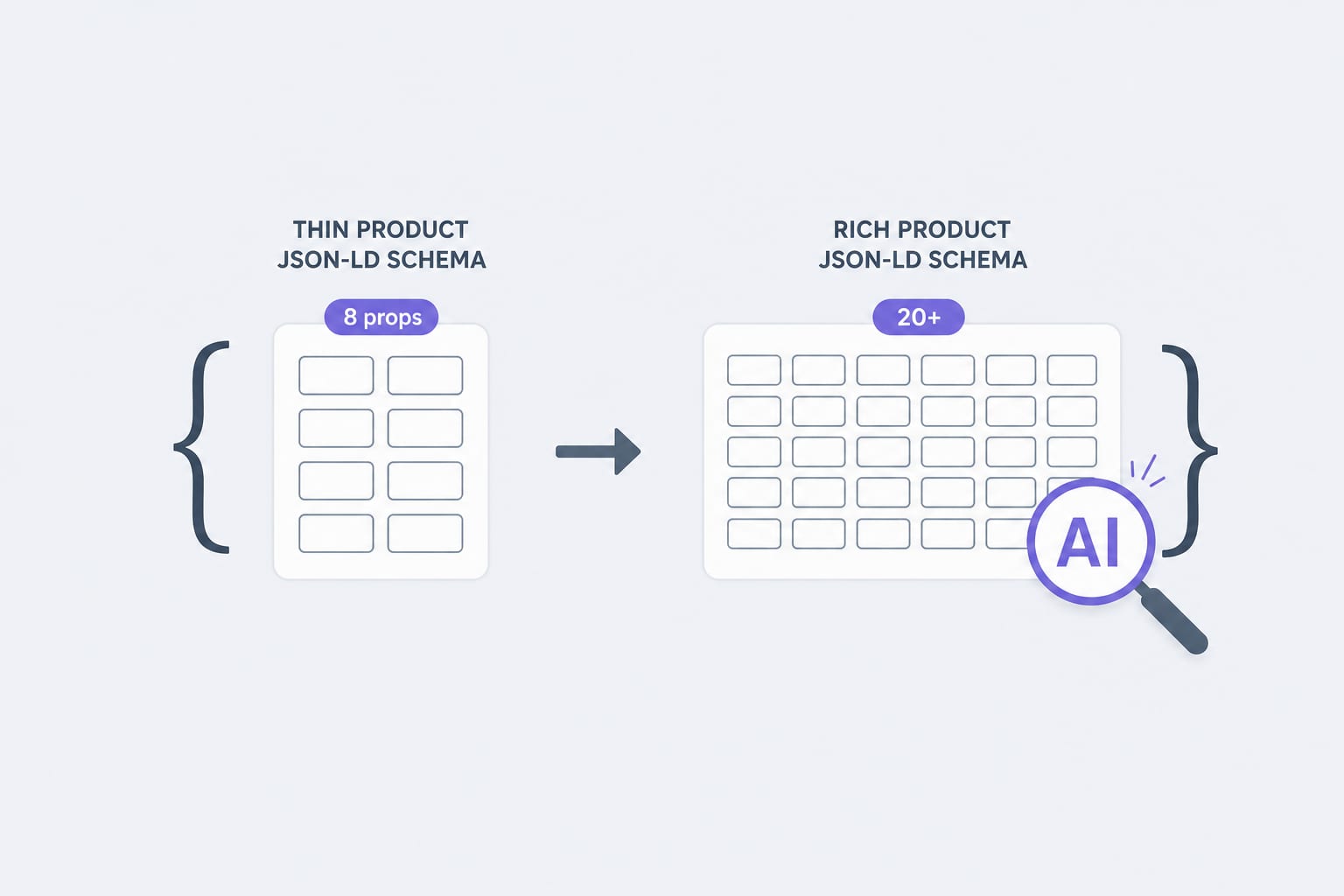

Schema Markup for Shopify in 2026: The JSON-LD Properties AI Agents Actually Read

Traditional Product schema uses 8 to 12 properties. AI agents lean on 20 or more. Here is the property list, the validation rules, and the implementation pattern we use for Shopify stores in 2026.

12 min read

Technical SEOApr 7, 2026

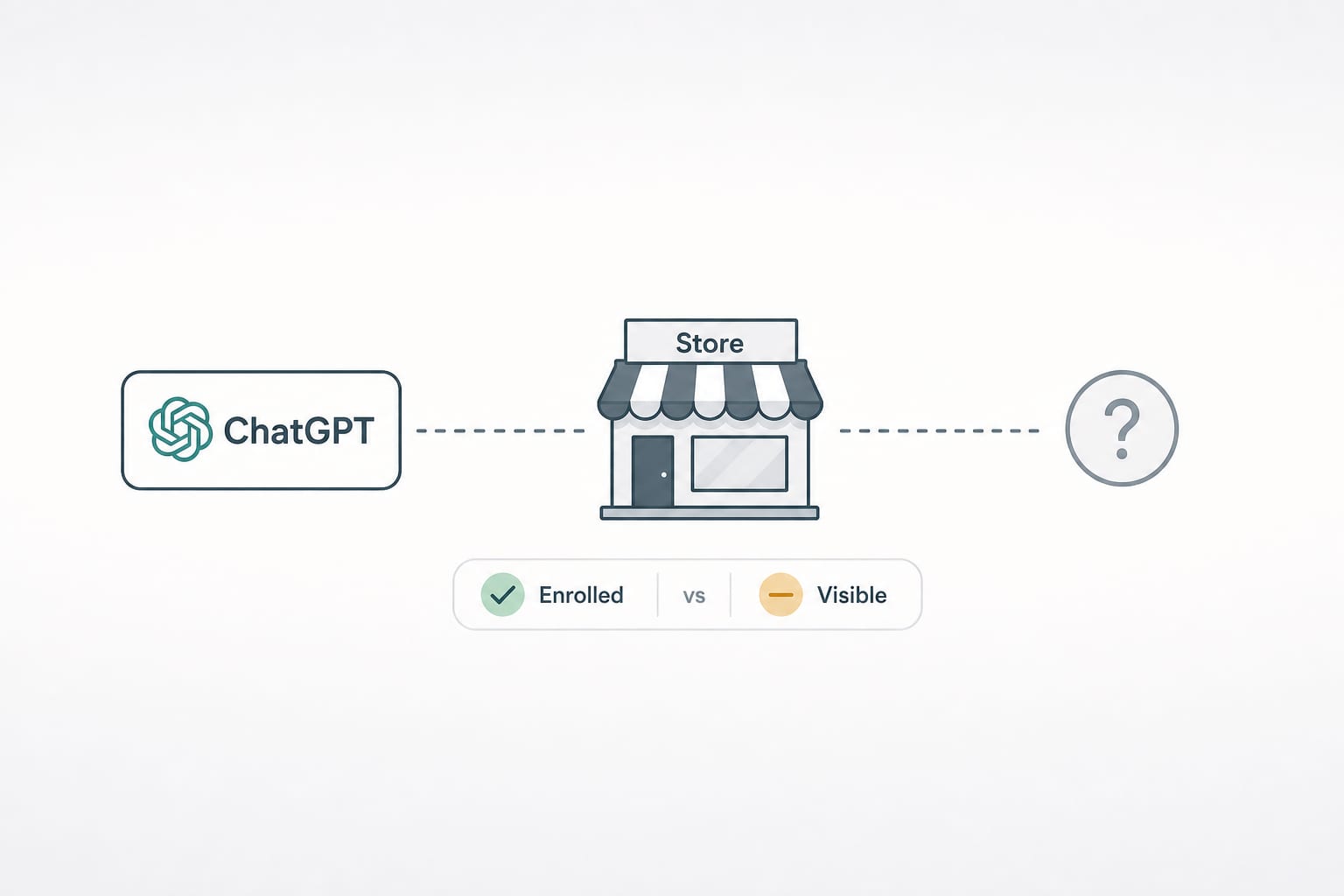

Is Your Shopify Store Discoverable Inside ChatGPT? A 10-Minute Audit for Agentic Storefronts

On March 24, 2026, Shopify made 5.6 million stores discoverable to AI agents by default. Here is the 10-minute audit we run to tell whether your store is actually getting recommended, or just enrolled.

13 min read

Technical SEOFeb 3, 2026

Hreflang on Shopify: The International SEO Setup That Actually Works

A senior engineer's hreflang setup guide for Shopify in 2026. When to use Markets vs multi-store, how canonicals interact with hreflang, the validation playbook, and the migration trap nobody warns you about.

11 min read